After gathering disavow experts in a previous post, I wanted to go more in depth. I knew exactly who to talk to: Modestos Siotos of iCrossing UK, one of cognitiveSEO’s long-term customers, who specializes in ultra-advanced link audits and penalty recoveries. So I put together a few questions to really get stuck into things.

Before we go hardcore into Penguin, Disavow and all that good stuff, could you explain what Penguin is?

In a nutshell, Penguin is a collection of signals Google uses to figure out to what extent a site’s backlink profile is ‘unnatural’.

If the ‘unnaturalness’ exceeds a certain threshold, Penguin will not only devalue the ‘unnatural’ links (so they don’t positively impact on rankings) but will also give a big hit to the keywords (and/or pages) the ‘unnatural’ links are pointing to.

Leaving aside Google’s brief official announcement and ambiguous hangouts, I’d say that in reality we still know very little about Penguin.

It was certainly a very big update that significantly changed the rules of the game, as it completely redefined link building as we knew it. It hit some businesses really hard, especially the smaller ones that until then could outrank more established brands (with much bigger SEO budgets) just by investing their small budgets in grey-hat, large-scale link building. Penguin has put an end to this. Small companies that have neither a long-term content strategy nor a decent budget for creative, outreach and PR activities really struggle to perform well in organic search, especially if they operate in competitive niches.

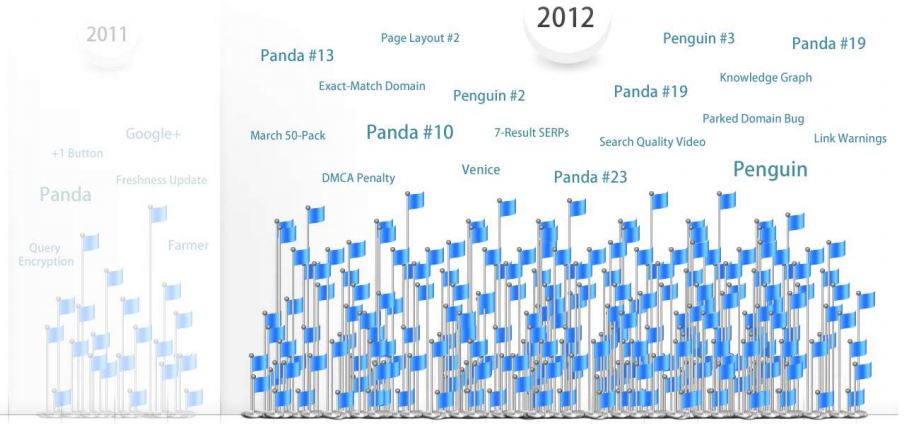

Penguin has certainly evolved a lot since it was first released in 2012, and we do know a great deal about how to avoid it and what needs to be done to recover from it. But at the same time we don’t know exactly which link valuation signals are part of it, what additional signals are added each time it gets updated, how it may be affecting rankings, and so on. It’s quite annoying to see SEOs writing best practice posts about the latest Penguin update just a couple of days after it has been released, without any empirical or other evidence. Penguin 3.0 was a great example: people were expecting a big update with a significant impact on the SERPs, but it proved to be just a refresh that only allowed some sites to recover.

Before Penguin came out, Google was already using several signals to evaluate links, and link devaluations were already taking place whenever the algorithm spotted a strong pattern of unnatural linking in a site’s backlink profile. However, a lot of spam was still going undetected, and ‘unnatural’ link building was the bread and butter of many SEO agencies because it was fairly easy to avoid being caught by the algorithm. Google had been preparing Penguin for quite some time. I remember Matt Cutts announcing it several months before it actually launched, but not many SEOs took him seriously, as he had been warning the industry about its link building practices for years.

However, unlike what many SEOs say, I don’t think that Penguin hits sites only on the dates it gets updated or refreshed.

We have no idea how often it gets refreshed, nor how long it takes for an update (or a refresh) to be fully rolled out, and I wouldn’t rely on Google reps’ comments to confirm whether a Penguin update (or refresh) has taken place or not. With Penguin expected to be added into the main algorithm, we will sooner or later be totally in the dark, as Google doesn’t comment on updates that are part of the main algorithm.

The only way to make a good guess about when a Penguin update or refresh may have taken place is to keep a close eye on the various Google SERP volatility tools. And of course one’s gut feeling and own experience are also important, regardless of the industry’s ‘noise’.

Do you think there is evidence that Penguin has improved the Google search experience?

With Penguin, Google has managed to get rid of a lot of spam that used to take up a great part of the web. I’m talking about tons of websites that existed solely for automated link building purposes, such as blog networks and content syndication sites. I’d say that back in 2012 more than half of the sites Google was crawling and indexing were pure spam sites that only existed to manipulate Google’s link graph.

A few years after Penguin and Panda were released, Google has a much cleaner web to crawl, with fewer ‘unnatural’ links. Most of those spam sites and blog networks did eventually shut down. I’d say that overall Penguin has improved the Google search experience, but the only evidence I have is my personal experience.

I certainly see far fewer ‘churn and burn’ sites ranking high these days for competitive terms, and far fewer sites that rank well but offer low-quality products or services or are just untrustworthy.

It comes as little surprise that for the most competitive queries the majority of page one results (at least here in the UK) come from fairly recognisable brands. This was certainly not the case 3-4 years ago.

Does the Panda Algorithm have anything at all to do with the disavow tool, what is it exactly?

No, Panda has nothing to do with the disavow tool. Panda’s focus is on evaluating a site’s quality based on onsite factors such as content, structure, design and layout.

If a site has been hit by Panda, using the disavow tool won’t help it recover.

In this case, the focus should be on identifying which parts of the site are of low quality and addressing them, either by improving the low-quality pages or by removing them. With Panda, the less crap you have on your site, the better.

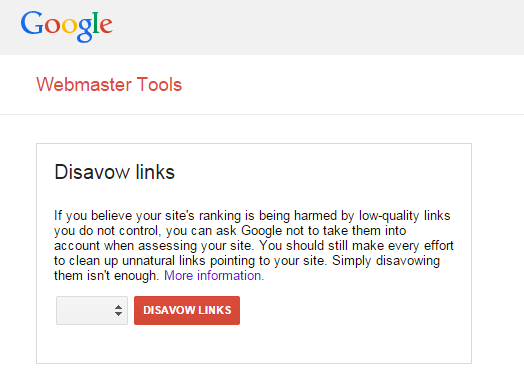

So what is the Disavow Tool and when should you use it?

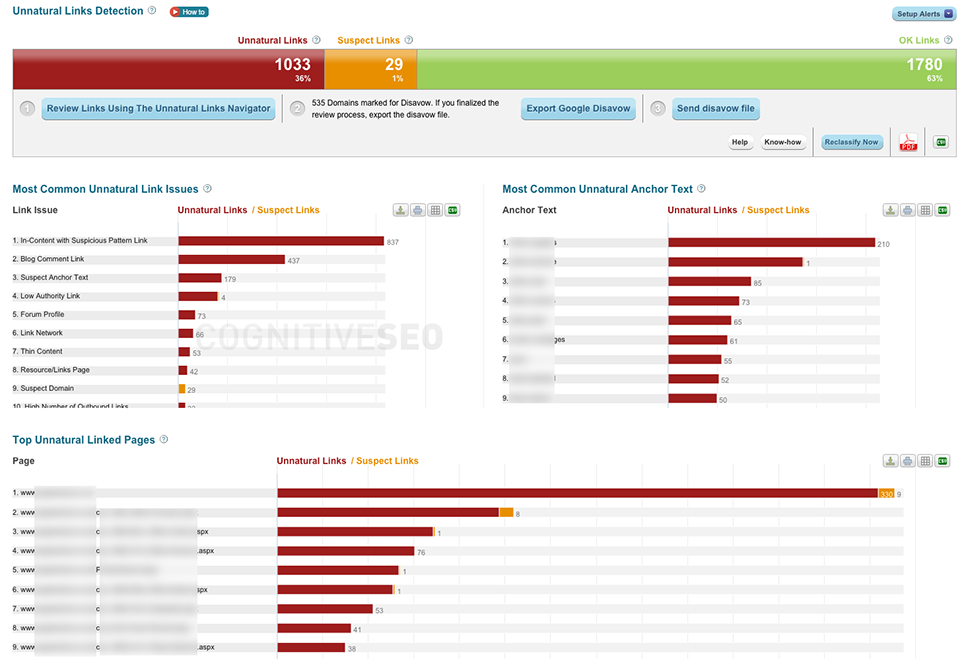

It is a tool to use if you need to clean up a site’s backlink profile without physically removing the links. It has multiple uses, such as:

- Trying to remove a manual action. In this case, you should try to remove as many unnatural links as possible. However, the ones that won’t be successfully removed need to be added into the disavow file before filing a reconsideration request.

- Trying to recover from Penguin. In this case, all unnatural links should be disavowed so that in the next Penguin refresh Google discounts them and the site makes some recovery.

- Risk mitigation. Disavowing unnatural and low-quality links on a regular basis should be part of standard SEO activities these days. Not only will there be much less risk of suffering a future manual penalty or a Penguin hit, but a site could even gain ranking positions from future algorithmic updates.

- Negative SEO. Whenever a site falls victim to negative SEO, all unnatural links should be added to the disavow file so they do not pass any negative value to the targeted website.

Can you explain the disavow process in easy terms?

Technically speaking, the term ‘disavow process’ isn’t correct. However, many people commonly use it in the context of the four common ways the disavow tool is being used, which I have already covered in the previous question.

So, before using the disavow tool you need to be 100% clear on what you are trying to achieve.

For instance, the disavow tool can help protect a site from a negative SEO attack that has already taken place. But when a manual penalty is in place, just using the disavow tool won’t be enough. You also need to file a reconsideration request, explain to Google which actions you have taken to address the unnatural links and which links you have successfully removed, share some evidence of all the actions you’ve taken, and plead guilty.

In strictly technical terms, you should try to disavow either at domain or subdomain level.

Disavowing just the URL of the page the unnatural link is found on is a bad practice because the same page is very likely to also exist under one or more different URLs (duplicate pages).

Disavowing at subdomain level also has some disadvantages. Let’s say you disavow the subdomain www.toxicsite.com, but toxicsite.com is also available and contains exactly the same content and toxic links as www.toxicsite.com. In this case, Google will only discount the links from www.toxicsite.com, and those appearing on toxicsite.com will still be causing harm to your site. Disavowing toxicsite.com instead would have addressed all links appearing on both toxicsite.com and www.toxicsite.com.

That said, there are some situations where disavowing at subdomain level is ideal. Let’s say you find a toxic link on toxicsite.blogspot.com. Disavowing the entire blogspot.com domain would also discount all links from every other blogspot subdomain, potentially including ones that link to you naturally. In this case, disavowing at subdomain level is the most appropriate solution.
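The domain-versus-subdomain rule described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the `domain:` prefix and `#` comment lines follow the disavow file format Google documents, but the function names, the `SHARED_PLATFORMS` list and the tiny `TWO_LABEL_SUFFIXES` set are hypothetical. A real audit would want a proper Public Suffix List lookup rather than this naive label counting.

```python
from urllib.parse import urlsplit

# Assumption: a hand-maintained list of hosting platforms where every
# subdomain belongs to a different owner, so only the subdomain is disavowed.
SHARED_PLATFORMS = {"blogspot.com", "wordpress.com"}

# Assumption: a tiny sample of two-label public suffixes. A real tool
# should consult the full Public Suffix List instead.
TWO_LABEL_SUFFIXES = {"co.uk", "com.au"}


def registrable_domain(host: str) -> str:
    """Naively collapse a hostname to its root domain (www.a.com -> a.com)."""
    labels = host.split(".")
    keep = 3 if ".".join(labels[-2:]) in TWO_LABEL_SUFFIXES else 2
    return ".".join(labels[-keep:])


def disavow_entry(url: str) -> str:
    """Return a 'domain:' line for the site hosting a toxic link."""
    host = (urlsplit(url).hostname or "").lower()
    if not host:
        raise ValueError(f"no hostname found in {url!r}")
    for platform in SHARED_PLATFORMS:
        if host.endswith("." + platform):
            # Keep only the label directly left of the platform name,
            # e.g. toxicsite.blogspot.com, never blogspot.com itself.
            owner = host[: -len(platform) - 1].split(".")[-1]
            return f"domain:{owner}.{platform}"
    # Everywhere else, disavow the root domain so that both the www and
    # non-www versions (and any duplicate URLs) are covered.
    return f"domain:{registrable_domain(host)}"


def build_disavow_file(urls) -> str:
    """Deduplicate entries and return the text of a disavow file."""
    entries = sorted({disavow_entry(u) for u in urls})
    return "\n".join(["# Toxic domains identified in link audit"] + entries) + "\n"
```

Passing both `http://www.toxicsite.com/page` and `http://toxicsite.com/other` to `build_disavow_file` yields a single `domain:toxicsite.com` line, while a link on `toxicsite.blogspot.com` is disavowed at subdomain level only.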

It seems there are some disagreements about whether you need to have bad links removed as well as disavowing them. A good friend of mine who is a hardcore SEO told me that Google does want to see some removals, however this opinion is not shared amongst the SEO tribe. Where do you stand on this?

Google’s stance on this is that you should try to remove as many links as possible to demonstrate good-faith efforts when submitting a reconsideration request. They’ve also said that the disavow tool should be used as a last resort, and only for those links that you tried to remove without success.

I’ve seen manual penalties being lifted without a single link being removed, simply by correctly identifying and disavowing the toxic links/domains.

There are some cases where this might be the only option to recover from a Google penalty. For instance, some types of links are simply impossible to remove, such as the ones that were generated by automated link building services. In most cases the only way to get rid of these links is to wait until the domains expire, but who can afford to wait that long? In these cases disavowing the toxic links is the only solution, something that Google’s manual reviewers seem to be aware of.

But if the toxic links appear mostly on guest posts and directories that are relatively easy to contact, you should put in the effort and try to remove them. Not only will this increase the chances of lifting the penalty sooner and in fewer attempts, but it will also stop the site from being associated with spam in users’ eyes. Here are a few things to consider if you’re thinking of taking shortcuts:

- What if a link that you have disavowed but didn’t bother removing is being reported to Google’s web spam team as manipulative and triggers a thorough manual review of all your backlinks in the future?

- What if the disavow file gets accidentally removed or replaced?

- Or say your client decides to change their domain name in the future. They migrate to a new domain but forget to migrate the disavow file.

In all these cases the penalty is very likely to come back, whereas if the links have been physically removed this would be impossible. A removed link is the safest option for both the short and longer terms – period.

SEOs who take shortcuts and decide to disavow instead of taking the much more time-consuming and painful path of link removals are just irresponsible and not thinking about the longer term.

Do you have any good examples of sites that have had a penalty, who have then gone through the disavow process and bounced back in the rankings and with web traffic?

When a manual action is lifted, rankings for the previously penalised keywords do tend to bounce back.

But they almost never come back to where they used to be, which makes sense considering the number of links you had to remove to get the penalty lifted. I have seen only one case where the penalty was officially lifted but the affected keyword didn’t come back to pages 1-2, and I haven’t (yet) seen any case where a site made a full recovery right after having a penalty lifted.

Can you simply resolve Penguin issues by getting better links?

In theory yes, but in reality there is little point trying to resolve Penguin by just getting better links without cleaning up the toxic ones that got you into trouble in the first place.

Obviously, if Penguin has hit your site, it means that your backlinks aren’t of great quality. So if you don’t clean up and just focus on getting better links, chances are that either you will have to wait a very long time until you have enough good links to outweigh the effect of the toxic ones, or, if you try to influence the generation of these ‘better’ links, you’ll probably get yourself into bigger trouble.

Is there a ratio between good/bad links as Google see it that is golden?

There is and there isn’t. Surely if 90% of a site’s links are good, it is much less likely to get penalised than a site whose backlink profile consists mostly of unnatural links.

Even if just 10% of a site’s links are unnatural and they follow an easily detectable footprint (e.g. they are all keyword-rich directory links) you could easily get a manual penalty.

I’ve seen many sites escape Penguin even though they have a low ratio of good to bad links. But I haven’t seen many sites with such a ratio avoid a manual action. In fact, if there is something very impressive about Google as far as manipulative link building is concerned, it isn’t the Penguin update but the number of sites that get manually reviewed and, in many instances, penalised. For manual actions, the overall ratio of good to bad links isn’t as important if, say, the majority of the most recent links are unnatural.

Is the old way of link building, which brought in the need for Penguin dead, or is there still a way of squeezing juice out of low level link building?

I don’t see low-level link-building as valuable post Penguin.

It may work in the short term but it’s not sustainable in the longer term. I recently attended SES London, where a fairly well-known veteran SEO was talking about the power of secondary link building. The idea was that instead of generating (low-quality) links to your site, you should target your best backlinks so they pass more value. But this misses the point, because even if it works today, what will happen the day Penguin starts looking into the links pointing to your links? Can you afford to burn out your best links? Definitely not worth the risk.

Do you think nofollowed links have a value?

Some recent tests we’ve carried out have shown that nofollow links do have value, probably more than most people in the industry think.

In some tests, nofollow links with certain characteristics even had a positive impact on rankings.

What is your opinion on the once fashionable and uber trendy “Guest Posting” techniques, which now seem to smell like old, dead fish to most SEOs?

I still come across them on penalty-lifting projects I am involved with and can’t believe people still rely on them to generate links these days. But I guess some people are just not willing to change their tactics regardless of what they get back.

What would you say to someone who does not consider organic rankings to be worth much and prefers to get their traffic from Google AdWords?

Make sure you are prepared to pay Google increasingly more and more money.

CPCs have been rising rapidly and almost certainly will keep rising.

Thanks, Modestos, for this great explanation of the disavow process and of link building as you see it in 2015. It’s very useful to learn about the process in such depth.

With every minor or major update, Google sets the hearts of website owners racing, and the disavow tool offers some consolation by helping to neutralise bad backlinks. But content is still king: if you write great content, Google will still acknowledge and reward the effort.